Modeling & Analytics

Modeling & Analytics is the quantitative pillar of Sustainable Catalyst—built for reproducible work, transparent assumptions, and outputs that can be reviewed and rerun.

The focus is not dashboards. It is decision-quality analysis with traceable inputs.

Principle: if a model changes, we should be able to explain what changed, why, and what it impacts.

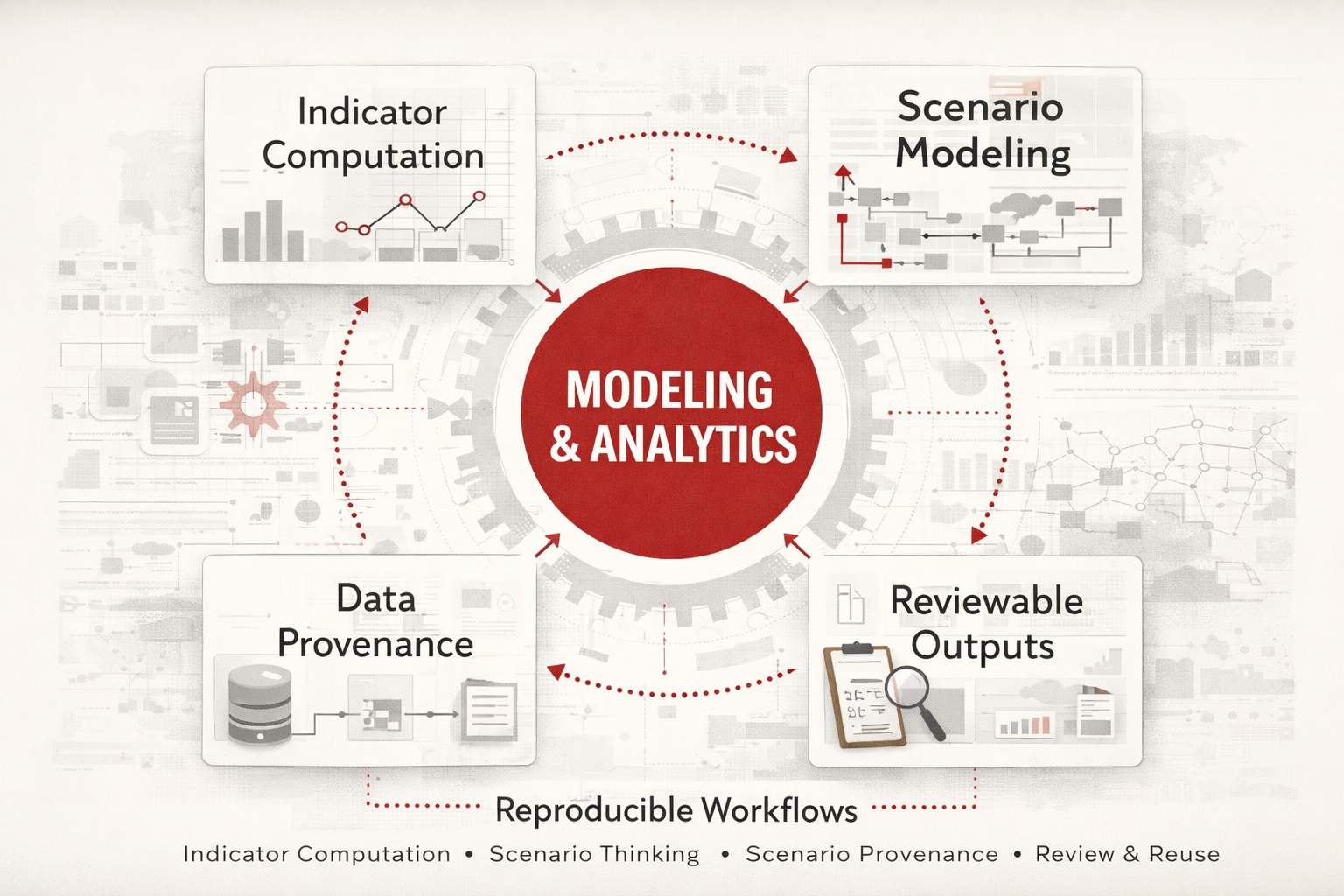

A visual framework for Modeling & Analytics, showing how reproducible workflows connect indicator computation, scenario thinking, provenance, and reviewable outputs.

What this pillar covers

This pillar focuses on how measurements are defined, computed, and interpreted.

It connects structured data — entities, sources, indicators, and periods — with reproducible analysis workflows.

- Measurement design — what to measure and how to define it

- Reproducible computation — methods that can be rerun and reviewed

- Scenario thinking — exploring choices and constraints without pretending to predict the future

- Transparent assumptions — documenting uncertainty rather than hiding it
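Scenario thinking with transparent assumptions can be sketched in a few lines of Python. This is a minimal illustration that assumes a simple compound-growth model of electricity demand and a fixed emissions factor. The `Assumptions` fields, `final_year_emissions`, and every number are hypothetical placeholders chosen for readability. They are not results or recommended values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Assumptions:
    """Hypothetical scenario inputs, each one named so it can be reviewed."""
    baseline_demand_mwh: float
    annual_growth: float             # fractional growth per year, e.g. 0.02 means 2%
    grid_intensity_t_per_mwh: float  # emissions factor, t CO2e per MWh
    years: int


def final_year_emissions(a: Assumptions) -> float:
    """Emissions (t CO2e) in the final year, assuming compound demand growth at constant intensity."""
    demand = a.baseline_demand_mwh * (1 + a.annual_growth) ** a.years
    return demand * a.grid_intensity_t_per_mwh


# Placeholder scenarios: only the growth assumption varies, so differences trace back to it.
scenarios = {
    "flat demand": Assumptions(1000.0, 0.00, 0.35, 5),
    "moderate growth": Assumptions(1000.0, 0.02, 0.35, 5),
    "fast growth": Assumptions(1000.0, 0.04, 0.35, 5),
}
results = {name: final_year_emissions(a) for name, a in scenarios.items()}

# Report the spread across scenarios rather than a single "forecast".
for name, tonnes in results.items():
    print(f"{name:>16}: {tonnes:7.1f} t CO2e")
print(f"range: {min(results.values()):.1f} to {max(results.values()):.1f} t CO2e")
```

This holds all but one assumption fixed, so each difference in output can be traced to a single input. The output is a set of conditional outcomes, not a prediction.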

Capabilities

- Indicator computation: Define indicators with clear units, time periods, and methods, then compute them consistently across datasets.

- Scenario modeling: Structured what-if analysis for planning and decision tradeoffs, without forcing false certainty.

- Data provenance: Track where measurements came from, how they were transformed, and what definitions were used.

- Reproducible exports: Produce outputs that can travel (tidy tables, documented methods, and stable schemas) for reuse across projects.
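The indicator computation and data provenance capabilities above can be illustrated with a minimal Python sketch. It assumes that an indicator is described by a code, a unit, a period, and a method. It also assumes that each computed value travels with a provenance record naming its sources and carrying a hash of its inputs. `IndicatorDefinition`, `compute_with_provenance`, and all field names are hypothetical, and the numbers are placeholders rather than real data.

```python
import hashlib
import json
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class IndicatorDefinition:
    """Hypothetical indicator spec: what is measured, in which unit, for which period, and how."""
    code: str
    unit: str
    period: str   # period label, e.g. "2023"; the labeling convention is an assumption
    method: str   # human-readable description of the computation
    version: int = 1


def per_capita(total: float, population: float) -> float:
    """Example method: a simple ratio indicator."""
    if population <= 0:
        raise ValueError("population must be positive")
    return total / population


def compute_with_provenance(defn: IndicatorDefinition, inputs: dict[str, float],
                            source_ids: list[str]) -> tuple[float, dict]:
    """Compute a per-capita indicator and return the value with a provenance record."""
    value = per_capita(inputs["total"], inputs["population"])
    # Hash a canonical JSON form of the inputs: a rerun on identical inputs yields the same digest.
    digest = hashlib.sha256(json.dumps(inputs, sort_keys=True).encode("utf-8")).hexdigest()
    provenance = {
        "indicator": defn.code,
        "definition_version": defn.version,
        "unit": defn.unit,
        "period": defn.period,
        "method": defn.method,
        "sources": sorted(source_ids),
        "input_sha256": digest,
        # Wall-clock time of this run; unlike the digest, it changes on every rerun.
        "computed_at": datetime.now(timezone.utc).isoformat(),
    }
    return value, provenance


# Placeholder definition and inputs for illustration only (not real data).
co2_per_capita = IndicatorDefinition(
    code="co2_per_capita",
    unit="t CO2e per person",
    period="2023",
    method="total emissions / mid-year population",
)
value, prov = compute_with_provenance(
    co2_per_capita,
    {"total": 1_200_000.0, "population": 300_000.0},
    ["src-inventory", "src-census"],
)
print(value, prov["sources"])  # 4.0 ['src-census', 'src-inventory']
```

The definition carries a version number, so a changed method can be told apart from changed data. The input digest then lets a reviewer confirm that a rerun used the same inputs.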

Modules in this pillar

- Catalyst Data: A shared SQL layer for entities, sources, indicators, and measurements, so that analysis and narrative reference the same system of record.

- Catalyst Analytics R: Reproducible analysis workflows for indicator computation, scenario work, and exports designed for review and reuse.

- Catalyst Finance: Applied microeconomics and pricing analytics for decision quality, built around explicit assumptions and defensible reasoning.

- Global Impact Catalyst: Indicator pipelines for SDG-style reporting, evaluation, and reproducible impact outputs.
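A shared SQL layer like Catalyst Data can be pictured as a small relational schema. In it, every measurement points to an entity, an indicator, and a source. The sketch uses Python's built-in `sqlite3` module with an in-memory database. The table and column names are hypothetical illustrations, not the actual Catalyst Data schema, and the rows are placeholders.

```python
import sqlite3

# Hypothetical schema: four tables mirroring entities, sources, indicators, and measurements.
SCHEMA = """
CREATE TABLE entities (
    entity_id    INTEGER PRIMARY KEY,
    name         TEXT NOT NULL
);
CREATE TABLE sources (
    source_id    INTEGER PRIMARY KEY,
    citation     TEXT NOT NULL
);
CREATE TABLE indicators (
    indicator_id INTEGER PRIMARY KEY,
    code         TEXT NOT NULL UNIQUE,
    unit         TEXT NOT NULL,
    method       TEXT NOT NULL
);
CREATE TABLE measurements (
    entity_id    INTEGER NOT NULL REFERENCES entities(entity_id),
    indicator_id INTEGER NOT NULL REFERENCES indicators(indicator_id),
    source_id    INTEGER NOT NULL REFERENCES sources(source_id),
    period       TEXT NOT NULL,
    value        REAL NOT NULL,
    PRIMARY KEY (entity_id, indicator_id, source_id, period)
);
"""

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite leaves foreign key checks off unless enabled
conn.executescript(SCHEMA)

# Placeholder rows for illustration only (not real data).
conn.execute("INSERT INTO entities VALUES (1, 'Example Region')")
conn.execute("INSERT INTO sources VALUES (1, 'Example national inventory (placeholder)')")
conn.execute("INSERT INTO indicators VALUES (1, 'renewable_share', 'percent', "
             "'renewable generation / total generation * 100')")
conn.executemany("INSERT INTO measurements VALUES (1, 1, 1, ?, ?)",
                 [("2022", 31.5), ("2023", 34.0)])
conn.commit()

# Every value comes back with its entity, indicator definition, unit, and source attached.
rows = conn.execute("""
    SELECT e.name, i.code, i.unit, m.period, m.value, s.citation
    FROM measurements m
    JOIN entities e USING (entity_id)
    JOIN indicators i USING (indicator_id)
    JOIN sources s USING (source_id)
    ORDER BY m.period
""").fetchall()
for row in rows:
    print(row)
```

Foreign key enforcement is switched on, so a value cannot be stored without a known entity, indicator, and source. This is one way to keep analysis and narrative pointing at the same record.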

Working style

If the model cannot be explained clearly, it is not finished. If it cannot be rerun, it is not reliable. If the assumptions are not visible, it is not trustworthy.